The Competence Paradox

The tools that make you better at the task also make you worse without the tool. This is not a theory. It is a documented phenomenon with a forty-year research trail, observed across many of the domains where automation, and now AI, has been introduced.

The clearest clinical evidence to date comes from gastroenterology. A study by Budzyń and colleagues, published in The Lancet Gastroenterology & Hepatology in 2025, compared endoscopists' performance before and after AI-assisted colonoscopy was introduced at their centers. After exposure to AI, the physicians detected fewer adenomas (precancerous polyps) in procedures performed without AI than they had before AI assistance was introduced. The tool improved the system. The tool degraded the human.

The pattern extends well beyond medicine. In aviation, NASA and FAA reports document a persistent finding: pilots who rely on autopilot systems for extended periods show measurable degradation in manual flying skills. In software engineering, a GitClear analysis found that code churn doubled between 2022 and 2024, a rise that coincided with the spread of AI coding tools.

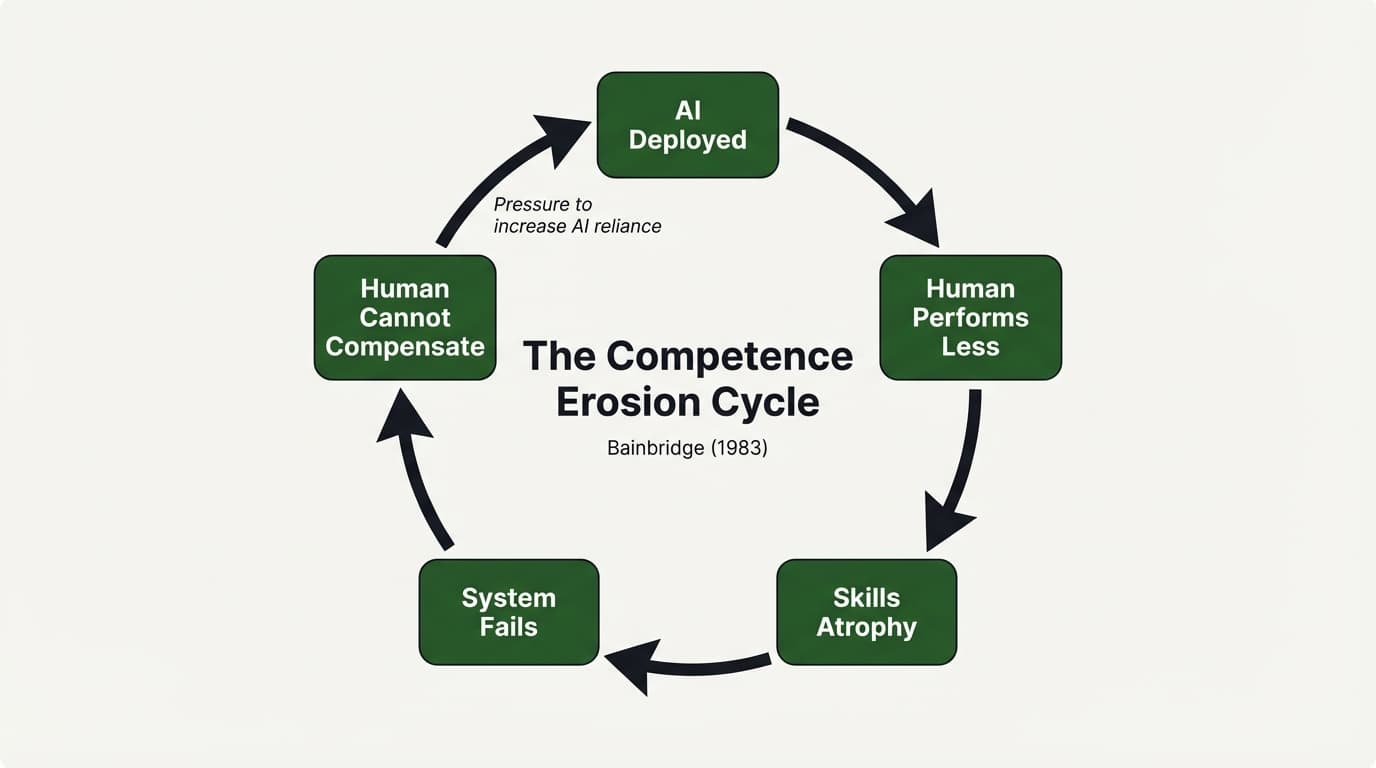

Lisanne Bainbridge identified the dynamic in 1983, in a paper titled "Ironies of Automation" that has since accumulated over 4,700 citations. Bainbridge argued that the more reliable an automated system becomes, the less skilled its human operators become at detecting when the system fails, because the automation takes away the practice that kept their skills sharp.

The erosion cycle is self-reinforcing. AI assistance reduces the need for manual practice. Reduced practice degrades the underlying skill. Degraded skill increases dependence on the tool. And increased dependence further reduces practice. The loop tightens with every iteration.

Thirty Years Is Too Long

The executive survey data is unambiguous. In February 2026, a Fortune and NBER study of nearly six thousand executives found that ninety percent reported no measurable productivity impact from their AI investments. Not a negative impact. No impact at all. The most widely deployed technology of the decade is, by its own users' assessment, producing no measurable productivity gain.

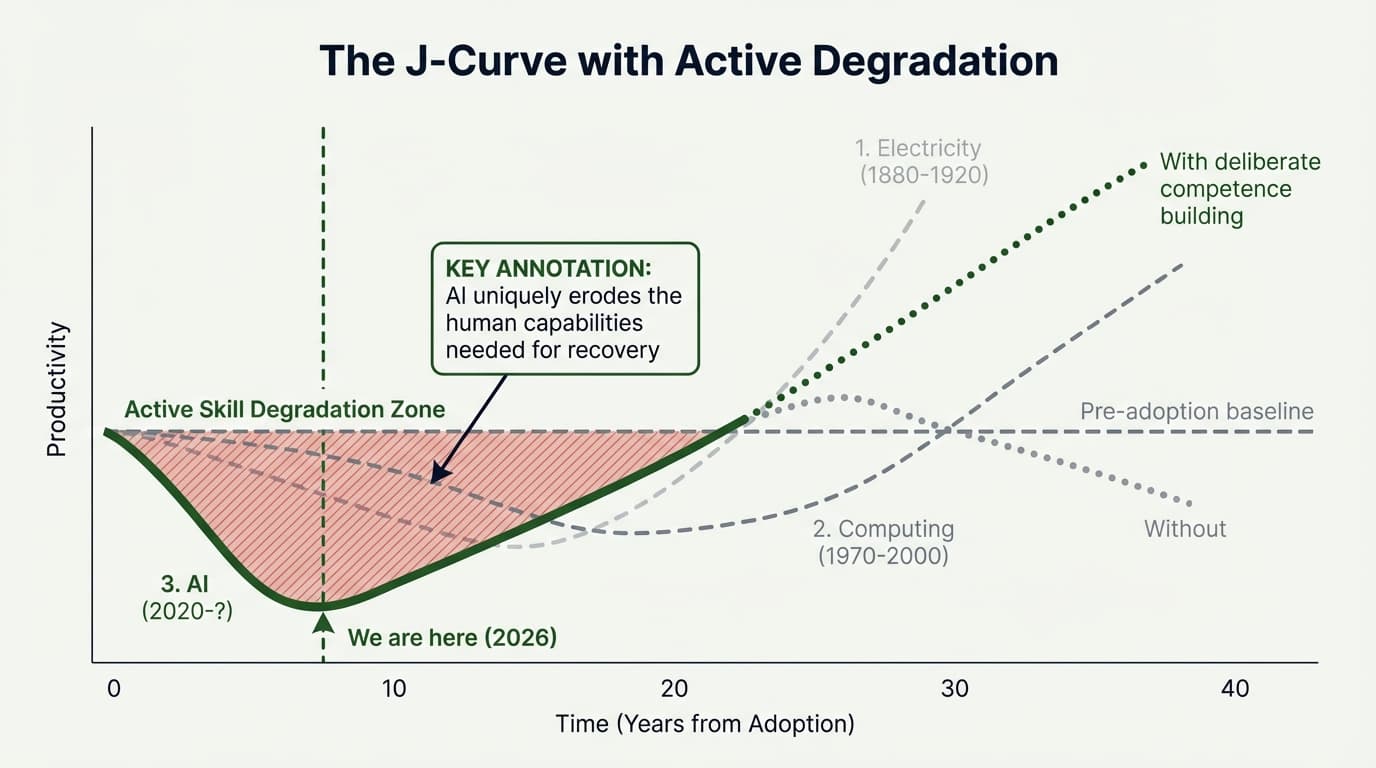

This is the AI version of a pattern that has played out before. The economic historian Paul David demonstrated in a 1990 paper that electricity took over thirty years to produce its promised productivity gains — not because the technology was slow, but because organizations had to rebuild their physical plants, management practices, and workforce skills before the potential could be realized.

Robert Solow captured the same lag for computing in 1987: "You can see the computer age everywhere but in the productivity statistics." The Productivity J-Curve framework developed by Erik Brynjolfsson and colleagues explains the mechanism. When organizations adopt a transformative technology, measured productivity initially declines, because the real gains require costly, time-consuming investments in organizational redesign and human capital that do not show up in measured output until they start to pay off.

But there is a critical difference between the AI transition and the general-purpose technology transitions that came before it. Electricity did not make factory workers forget how to use their hands. Computing did not degrade accountants' ability to reason about numbers. AI actively erodes the competencies it automates, which means the bottom of this J-Curve is likely to be deeper than in any earlier transition.

The organizations that understand this, that the AI transition requires not just new technology but deliberate investment in human competence, will not wait thirty years. They will reorganize now, while there is still competence left to preserve.

The TwinLadder

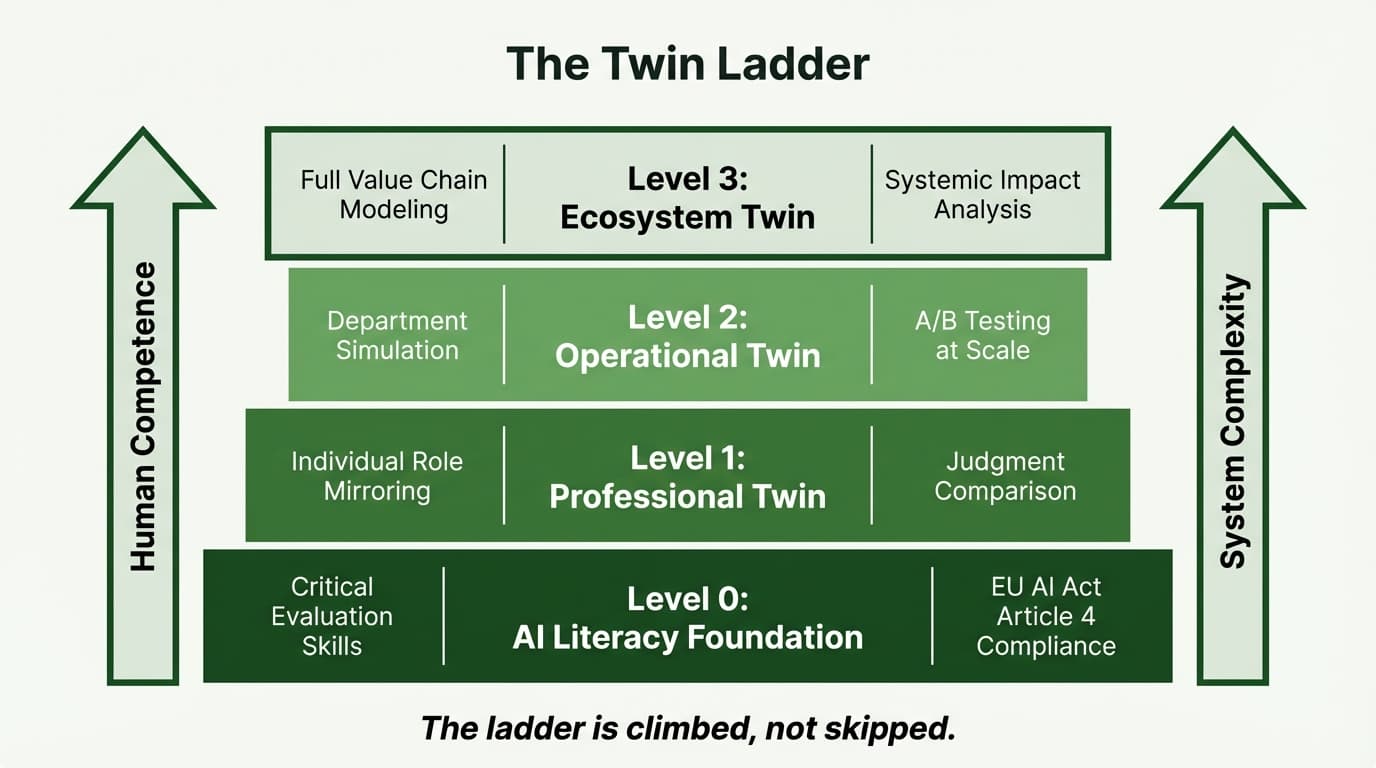

The TwinLadder is a four-level progression for building AI competence from the individual to the ecosystem. It addresses the competence paradox not by slowing AI adoption, but by ensuring that the humans working alongside AI maintain and deepen their professional judgment.

The On-Ramp

The baseline ability to critically evaluate AI output. This is where everything begins, and without it, every subsequent level is built on sand. Can your people tell the difference between good output and confident nonsense?

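This level maps directly to Article 4 of the EU AI Act, which requires organizations that provide or deploy AI systems to take measures ensuring a "sufficient level of AI literacy" among staff and others who operate or use those systems on their behalf. Enforcement begins August 2026.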

Mirror, Compare, Grow

An AI agent mirrors an individual role — not for replacement, but for comparison. The professional sees what the AI produces for their specific domain and learns from the delta between their judgment and the machine's output.

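The core mechanism is a prediction-first interface: the professional commits to their own assessment before seeing the AI's recommendation. Committing first matters, because once the AI's answer is visible there is no independent judgment left to compare against. The learning is in the delta.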
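A minimal sketch of the prediction-first rule, assuming the professional's and the AI's assessments can both be expressed as a number on the same scale. The class and method names (`PredictionFirstReview`, `commit`, `reveal`, `delta`) are hypothetical and not part of any TwinLadder product; the sketch only models the ordering constraint: no reveal before commit, and no revision after.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PredictionFirstReview:
    """Hypothetical prediction-first review record.

    reveal() withholds the AI's score until the professional has committed
    their own score for the same case. Both scores are assumed to share one
    numeric scale (e.g. a 0-10 risk rating). Python does not enforce privacy,
    so this models the ordering rule, not access control.
    """
    case_id: str
    ai_score: float
    human_score: Optional[float] = None  # set exactly once by commit()

    def commit(self, score: float) -> None:
        # Lock in the human judgment; it cannot be revised afterwards.
        if self.human_score is not None:
            raise RuntimeError("assessment already committed")
        self.human_score = score

    def reveal(self) -> float:
        # Refuse to return the AI output before the human has committed.
        if self.human_score is None:
            raise RuntimeError("commit your own assessment first")
        return self.ai_score

    def delta(self) -> float:
        # Signed gap: positive means the AI rated the case higher
        # than the professional did.
        ai = self.reveal()  # raises if nothing has been committed yet
        return ai - self.human_score


# Example: the professional commits 5.0, then sees the AI's 7.5.
review = PredictionFirstReview(case_id="case-017", ai_score=7.5)
review.commit(5.0)
print(review.delta())  # 2.5
```

In practice the deltas collected across many cases, not any single one, are what show where a professional's judgment consistently diverges from the machine's.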

Test Before You Commit

Digital replicas of departments, supply chains, or business units. Organizations can A/B test alternative configurations before committing resources. The critical discipline is ensuring decision-makers understand why one approach outperforms another, not merely that it does.

Shape the Rules

Multi-entity AI agents representing regulatory environments, market dynamics, and competitive ecosystems. Built for sectors such as energy, finance, and healthcare, where regulation co-evolves with the technology it regulates.

The Science: Desirable Difficulties

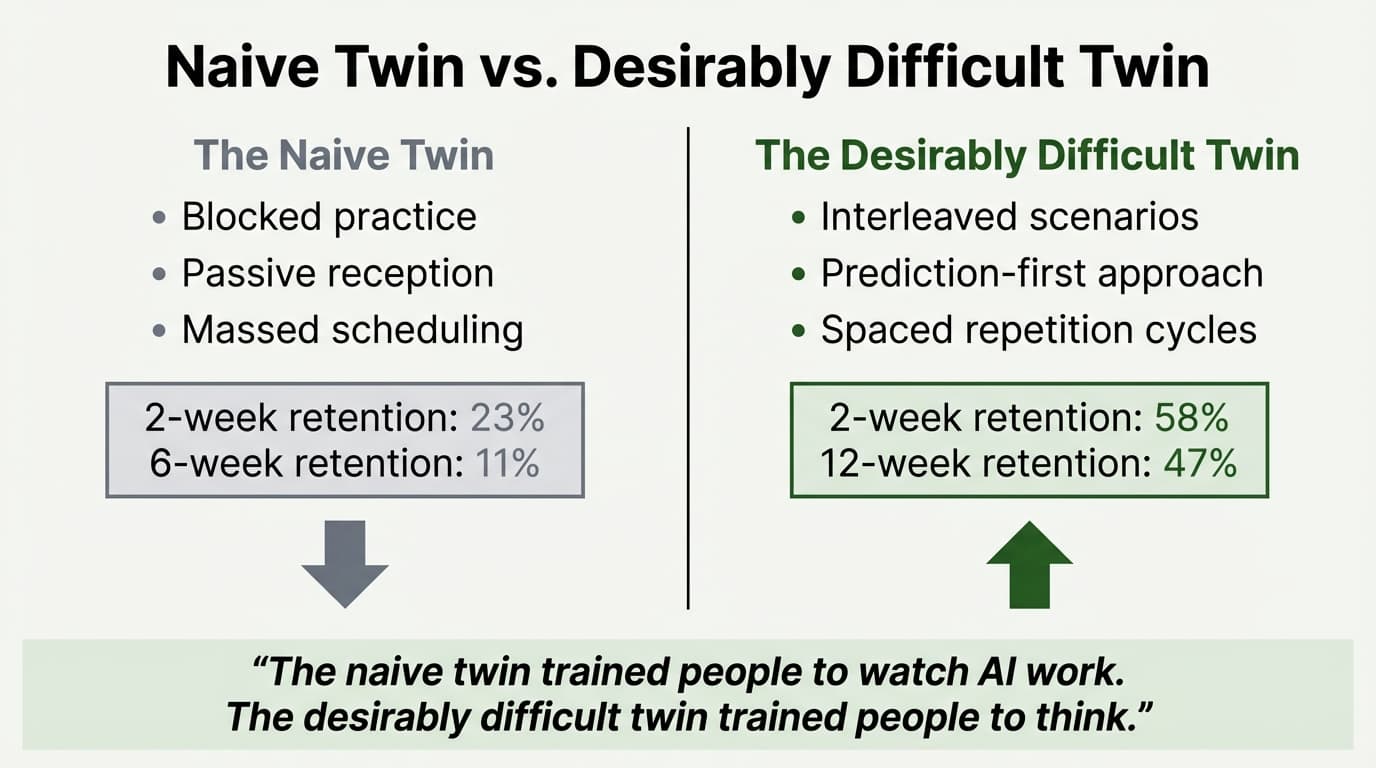

Robert Bjork's research on desirable difficulties is among the most replicated findings in cognitive science. The core insight is counterintuitive: certain learning conditions that make performance worse during training, such as spacing practice out or testing yourself instead of rereading, make performance better in the long run. The discomfort is the mechanism, not a bug to be optimized away.

Anders Ericsson's decades of research on deliberate practice tells the same story from a different angle. Expertise requires sustained, active engagement with progressively difficult tasks — tasks that stretch current ability rather than confirm it.

AI systematically removes the difficulty from professional work. It removes the friction, the struggle, the repeated failure that consolidates understanding. In doing so, it removes the mechanism by which expertise has always been built.

The difference is between people trained to watch AI work and people trained to think alongside AI.

This is the design principle behind every TwinLadder engagement: preserve desirable difficulties even as AI handles the routine work.

Where Does Your Organization Stand?

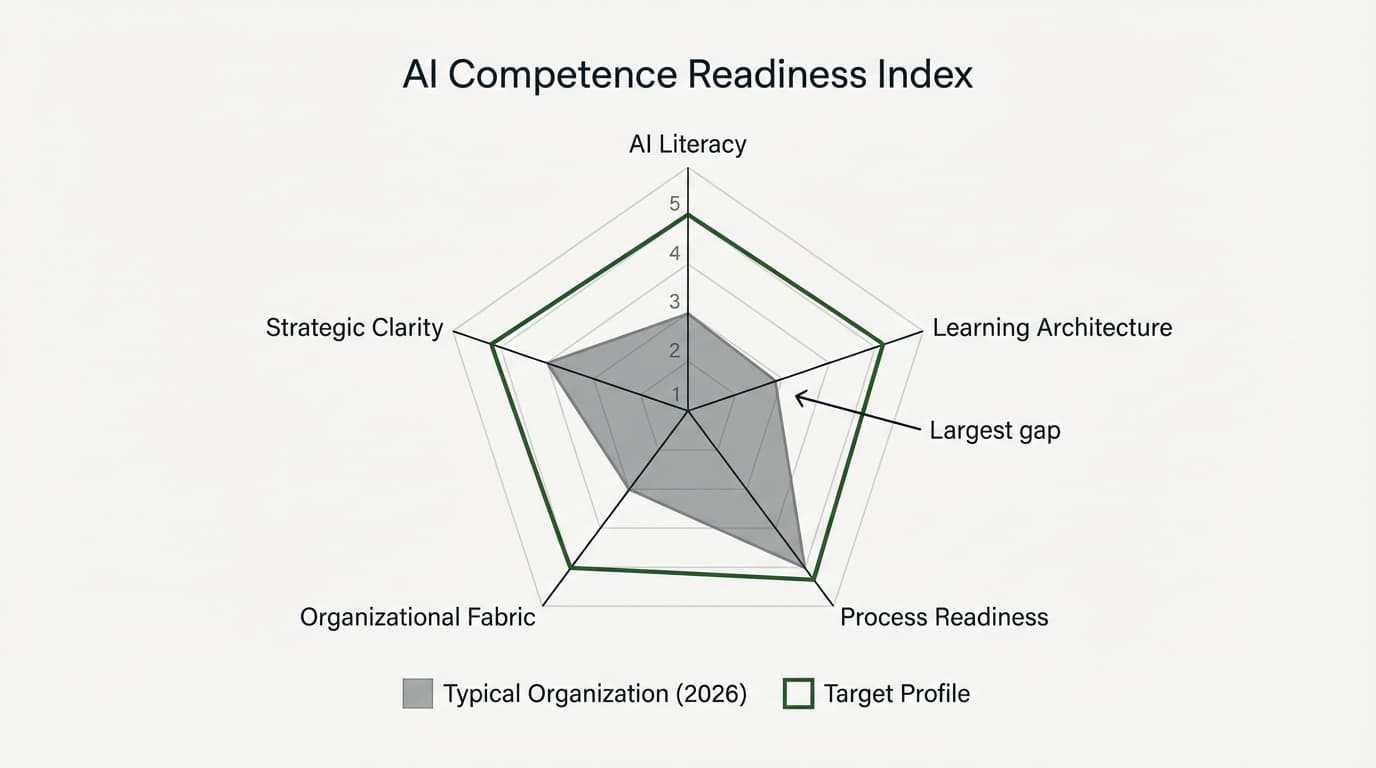

The AI Competence Readiness Index measures five dimensions of organizational preparedness. Not your ability to deploy AI tools — most organizations have that — but your capacity to develop the human competence to use them well.

Most organizations currently score between 8 and 14 out of 25. The typical profile is telling: moderate scores on AI Literacy and Process Readiness, but low scores on Learning Infrastructure and Competence Preservation — the dimensions that matter most for long-term capability.

Here is a test you can run this week. Ask five people on your team to evaluate the same piece of AI-generated work: a report, a recommendation, a draft analysis. Do not tell them it is AI-generated. Ask them to assess its quality, identify its weaknesses, and decide whether to act on it. If the variance in their assessments is low and the quality of their critiques is high, your organization is further along than most. If the variance is high and the critiques are shallow, that result is a more honest readiness score than any survey will give you.
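The variance half of this test is simple arithmetic. A minimal sketch, assuming each reviewer gives one overall quality rating on a shared 1-10 scale (a hypothetical choice; the text does not prescribe a scale) and that `rating_spread` is an illustrative helper, not part of the AI Competence Readiness Index. Where to draw the line between "low" and "high" spread is a judgment call the text leaves open.

```python
from statistics import mean, pstdev


def rating_spread(ratings: dict[str, float]) -> dict[str, float]:
    """Summarize how much reviewers disagree about one piece of AI output.

    Assumes each reviewer gave a single quality rating on a shared scale
    (1-10 here, a hypothetical choice). This covers only the agreement half
    of the test; the depth of each written critique still needs a human read.
    """
    scores = list(ratings.values())
    if len(scores) < 2:
        raise ValueError("need at least two reviewers to measure spread")
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),             # population standard deviation
        "range": max(scores) - min(scores),  # widest disagreement
    }


# Hypothetical ratings from five reviewers of the same AI-drafted report.
ratings = {"A": 7, "B": 3, "C": 8, "D": 4, "E": 8}
summary = rating_spread(ratings)
print(summary["mean"], summary["range"], round(summary["stdev"], 2))
```

A wide range on the same document (here, one reviewer rating it 3 and another 8) is the signal worth following up, by reading the critiques behind the extreme scores.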

What To Do Monday Morning

Three actions. One day. No budget required. No technology. No external consultant. These are diagnostic tools, not solutions — but they will tell you more about your organization's actual AI readiness than any vendor assessment.

Test Your Team's Ability to Evaluate AI Output

Ask five people on your team to evaluate the same piece of AI-generated work — a report, a recommendation, a draft analysis. Do not tell them it is AI-generated. Ask them to assess its quality, identify its weaknesses, and decide whether to act on it. The variance in their responses tells you more than any readiness survey.

Map One AI-Changed Process and Check for Judgment Erosion

Identify one process that AI has materially changed in the past twelve months. Find the people who used to make the key judgment calls in that process. Ask them directly: do you still understand why the system makes the decisions it makes? Would you know if it was wrong? How would you know?

Count the Interactions That Disappeared

Determine how many cross-functional meetings, informal check-ins, or collaborative sessions have been replaced by automated reports, AI summaries, or dashboards in the past six months. Ask whether the information quality improved or whether something was lost in translation.

What these actions do require is the willingness to ask uncomfortable questions and the honesty to hear the answers.

EU AI Act, Article 4

Article 4 of the EU AI Act has applied since 2 February 2025 (the Act itself entered into force in August 2024). Enforcement begins August 2026. The provision requires a "sufficient level of AI literacy" among all staff who operate or use AI systems, a deliberately broad mandate that encompasses virtually every knowledge worker in every organization deploying AI tools.

Level 0 of the TwinLadder, the On-Ramp (AI Literacy Foundation), maps directly to substantive Article 4 compliance. Not because compliance was the design goal, but because the underlying requirement is the same: people who work with AI systems must understand what those systems can do, what they cannot do, and how to evaluate their outputs critically.

Start a conversation.

What happens to human competence when AI handles the work that built it? That is the question we are working on. If you are a leader trying to figure out what that means for your organization, not theoretically but practically, we would like to hear from you.

AI Competence for Organizations

A comprehensive guide to building organization-wide AI competence beyond Article 4 compliance.

AI Competence for Legal Professionals

How legal teams can build systematic AI competence while managing professional liability.

AI Competence for HR Professionals

Coming soon — preparing your workforce for AI transformation.
